The World Wide Web is the most visible part of the Internet, consisting of a very large collection of pages. We all know that the web is big, but how big is it really, and how big will it become?

Means of Expression

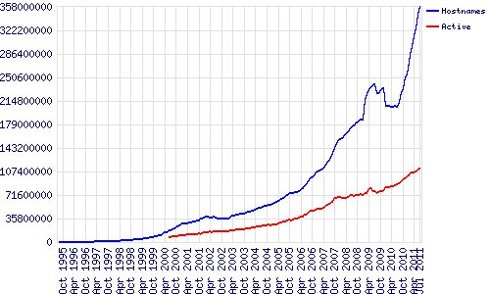

We can express the size of the web as either a number of pages or a number of sites. The number of sites has been tracked by Netcraft for many years. Initially their estimate was based solely on the number of registered sites. However, at some point it became popular to register domain names without actually using them (for resale purposes), so they adjusted their methodology to track the number of ‘active’ sites. Interestingly, the total number of sites seems to rise exponentially, while the number of active sites increases more slowly and is much closer to linear. This can be seen in the Netcraft graph.

I conducted a simple linear regression in July 2009 on the Netcraft data available back then, when there were 80 million active sites. This yielded an estimate of about 100 million active sites for January 2013. However, as the graph shows, that point has already been surpassed: there are nearly 110 million active sites as of July 2011. Hence, a more accurate estimate for January 2013 is around 125 million active sites: more than 1.5 times as many as in July 2009!
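A linear extrapolation of this kind can be sketched in a few lines. This is only an illustration, not the original regression: it fits a least-squares line through just the two figures quoted above (80 million in July 2009, nearly 110 million in July 2011), and the helper name `fit_line` is my own. With so few rounded data points, its output need not match the estimate above exactly.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Months since July 2009 and active sites in millions, as quoted in the text.
# Assumption: only these two points are used; the full Netcraft series
# is not reproduced here.
months = [0, 24]              # July 2009, July 2011
active_sites = [80.0, 110.0]  # millions

slope, intercept = fit_line(months, active_sites)

# January 2013 is 42 months after July 2009.
estimate_jan_2013 = slope * 42 + intercept

print(f"growth: {slope:.2f} million active sites per month")
print(f"January 2013: about {estimate_jan_2013:.1f} million active sites")
```

Feeding in the complete monthly series instead of two points would turn this into the same kind of regression described above.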

Another Perspective

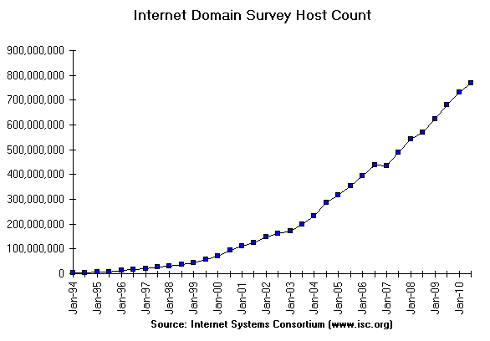

The ISC Domain Survey attempts to count the same thing as the blue ‘host names’ line in the Netcraft survey. It gives an estimate of about 800 million for July 2010, which is significantly higher than Netcraft's figure for the same period: 200 million. Some attribute this to methodological differences. Nevertheless, the exponential shape of the line is similar to that of Netcraft, except for the odd drop and stagnation around October 2009.

Users as Starting Point

More than a quarter of the world population has access to the Internet: as of March 2011 there are about 2 billion Internet users and 7 billion people on the planet. This number is expected to increase, albeit I suspect more slowly than before, since deployment in developing countries will be somewhat slower. There is of course a connection between the number of Internet users and the number of web pages. The numbers suggest that in the present situation there is about 1 active website for every 16 Internet users.

All of this gives us no information yet about the actual number of web pages. Based on data from 2005, Boutell estimates an average of 273 pages per website. However, their methodology is questionable. Google claimed to have passed one trillion unique URLs in mid-2008. Applying the same methodology, this would imply an average number of unique URLs per active host that seems very high to me. However, assuming this number includes deep links (those with HTTP GET parameters in the URL) already makes it somewhat more realistic.

An On-line Answer

One site, based on a master’s thesis, gives a daily estimate of the size of the indexed World Wide Web: the part that can be found by search engines. It claims that there are about 17.83 billion indexed web pages. Given this number, there are roughly 160 web pages per active host on average (17.83 billion pages spread over nearly 110 million active sites). Keep in mind that these statistics follow a long-tail distribution: a few big hosts contain a large number of pages and many small hosts have only very few pages.
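The per-host average follows from a single division. The sketch below assumes the 17.83 billion indexed pages can be paired with the figure of nearly 110 million active sites quoted earlier for July 2011; both inputs are rounded, so the result is only indicative.

```python
# Figures quoted in the text; pairing them is an assumption of this sketch.
indexed_pages = 17.83e9   # indexed web pages
active_sites = 110e6      # "nearly 110 million" active sites, July 2011

pages_per_site = indexed_pages / active_sites
print(f"about {pages_per_site:.0f} indexed pages per active site")
```

Because of the long tail, this mean says little about a typical host: most sites will sit well below it.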

Conclusion

If the size of the indexed web grows proportionally with the number of active sites, then there will be nearly 20 billion[1] web pages in January 2013. Remember this is only the visible web: the part that search engines can see. The deep web, the part not indexed by search engines, may well be over 55 times[2] bigger than this in 2013: about 1.2 trillion pages.
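The proportional projection can be written out as a small calculation. This is a rough sketch under stated assumptions: the function name `project_indexed_pages` is mine, the inputs are the rounded figures from the text (17.83 billion indexed pages, nearly 110 million active sites now, about 125 million in January 2013), and the deep-web factor of 55 is taken as given. Because of the rounding, the output only approximates the numbers quoted above.

```python
def project_indexed_pages(pages_now, sites_now, sites_future):
    """Scale the indexed page count in proportion to the number of active sites."""
    return pages_now * (sites_future / sites_now)


# Rounded inputs taken from the text (assumptions of this sketch).
visible_2013 = project_indexed_pages(
    pages_now=17.83e9,    # indexed pages today
    sites_now=110e6,      # active sites, July 2011
    sites_future=125e6,   # estimated active sites, January 2013
)

# Deep web assumed to be 55 times the visible web, as stated in the text.
deep_2013 = 55 * visible_2013

print(f"visible web, January 2013: {visible_2013 / 1e9:.1f} billion pages")
print(f"deep web, January 2013:    {deep_2013 / 1e12:.2f} trillion pages")
```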

This entire methodology still relies on static web pages and dynamic web pages that work via HTTP GET. AJAX, where a web page retrieves content and updates itself dynamically without reloading, makes the situation even more complex. What counts as a page in such a set-up? Any URL can have many instantiations that depend not solely on time, but also on the user’s characteristics and preferences.

So, this question is going to become increasingly hard to answer because new technologies make the meaning of ‘page’ and ‘site’ much less well defined.

Notes

1)

2)